FACEIT, one of the largest independent competitive esports platforms, has partnered with Alphabet-owned Jigsaw to tackle the ongoing issue of toxic behavior in online gaming. Jigsaw and FACEIT have implemented Perspective, a machine-learning AI fine-tuned to weed out harmful comments, on FACEIT’s competitive servers.

FACEIT is a competitive esports platform with over 15 million users, hosting thousands of online matches every day. Toxic comments, harassment, and spam are common problems in online gaming, including on independent services like FACEIT. In the past, most of FACEIT’s moderation of in-game comments relied on users reporting an incident and human moderators reviewing the messages before handing out a punishment. This process was slow, and it could not catch every harmful individual on the platform.

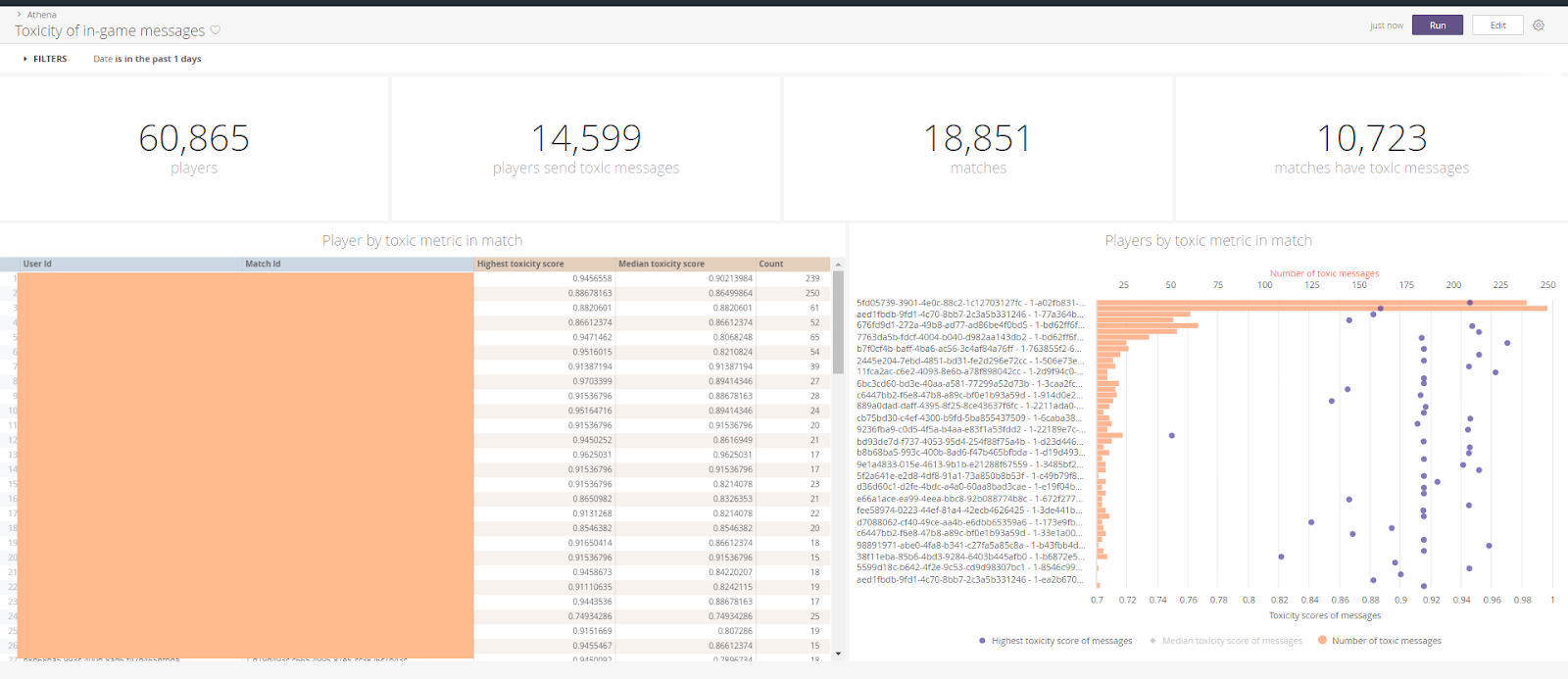

FACEIT has now turned to machine learning to tackle harassment on its platform. In partnership with Alphabet-owned Jigsaw, it has implemented Perspective on its competitive platform. Using machine learning, Perspective can read and learn from toxic messages sent on the platform and send an appropriate response to any offending players. A “toxicity threshold” can be set so that users are penalized for sending messages rated above a certain level of toxicity. In the few weeks since Perspective’s implementation, over 160 million messages have been analyzed, and players who sent those deemed toxic have been swiftly penalized.

“Perspective has grown from helping small and large publishers to being applicable to a really wide range of industries. Esports and gaming is unique because the pace and content are very different than what you would find on a news site,” says Lucy Vasserman, Manager and Technical Lead on the Conversation AI team.
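To make the “toxicity threshold” idea concrete, here is a minimal sketch of how a score-based rule could work. Perspective’s public API reports toxicity as a score between 0 and 1; everything else below is an assumption for illustration, including the threshold values, the action names, and the `moderate` function. It is not FACEIT’s actual configuration or code.

```python
from dataclasses import dataclass

# Hypothetical thresholds for illustration only; the article does not
# state the values FACEIT uses.
WARN_THRESHOLD = 0.80
VERY_HIGH_THRESHOLD = 0.95


@dataclass
class Decision:
    action: str   # "allow", "warn", or "flag_for_review"
    score: float  # toxicity score in [0, 1]


def moderate(score: float,
             warn_threshold: float = WARN_THRESHOLD,
             very_high_threshold: float = VERY_HIGH_THRESHOLD) -> Decision:
    """Map a toxicity score in [0, 1] to a moderation action."""
    if not 0.0 <= score <= 1.0:
        raise ValueError("score must be between 0 and 1")
    if score >= very_high_threshold:
        # Most severe tier: penalize, but leave room for human review.
        return Decision("flag_for_review", score)
    if score >= warn_threshold:
        return Decision("warn", score)
    return Decision("allow", score)


if __name__ == "__main__":
    # Made-up scores standing in for what a scoring service might return.
    for s in (0.12, 0.85, 0.97):
        print(s, moderate(s).action)
```

Raising or lowering the thresholds trades missed toxicity against false positives, which is one reason a platform might keep human review in place for the highest tier.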

FACEIT reports a 7% decrease in comments above the “very high” toxicity threshold and a 20% decrease in toxic messages overall. The platform is reportedly catching the 5% most harmful individuals, with around 1,000 users warned about their behavior every day.

To avoid false bans and overlooked toxicity, Perspective has not fully taken over the moderation process. FACEIT continues to review the analyzed messages to gauge Perspective’s accuracy. “FACEIT has done a wonderful job of balancing the need for immediate response while still keeping humans in the loop, by allowing flagged players to request review by the moderator team after the game has ended,” Vasserman states. “The gaming industry is so dynamic and fast moving that it was exciting to see how our technology could keep up and help gamers get real-time feedback on their messages.”

At the moment, Perspective can only target written messages sent in-game. The team acknowledges that toxic behavior can be expressed in many other ways, including voice chat, griefing, and cheating, none of which show up in in-game text. FACEIT plans to grow and evolve its moderation process, with Perspective being the first move towards a “bigger initiative.”